
AI Transformation Is a Governance Challenge in the United States

Artificial intelligence is reshaping business practices across the United States. Healthcare providers rely on intelligent systems as much as banks do. Retailers streamline tasks with AI, while delivery networks adjust routes behind the scenes. Cybersecurity defenses adapt in real time instead of waiting for human input. Marketing teams analyze behavior patterns without sifting through spreadsheets by hand. Efficiency climbs when machines handle repetitive jobs, and customer interactions grow smoother even when people never notice the technology underneath.

Most organizations now recognize that AI can do many things well. Yet getting it right takes more than tools. What most often holds progress back is governance: how the rules are set. Companies invest in software quickly, while clear guidelines, ways to review decisions, and accountability for problems are too often ignored.

As AI grows more capable and slips into everyday tasks, how it is managed increasingly determines whether a transformation succeeds or collapses. One governance failure can destabilize the whole effort. Power without oversight is where things unravel fast.

Understanding AI Transformation

Some firms assume that success in AI transformation comes down to clever code or technical experts. Tools matter, but people who understand change often shape outcomes more. A strong platform helps, yet knowing how teams adapt makes a bigger difference. Clever algorithms are everywhere; real progress comes from the daily decisions made inside organizations. Software runs the systems, but habits steer the results.

Even top-tier AI can stumble if oversight slips. Without clear rules, even smart tools can cause harm. How we manage them shapes their impact more than speed or power ever could.

AI transformation can involve tools such as:

- automated customer support systems

- predictive analytics platforms

- AI-powered recommendation engines

- workflow automation tools

- intelligent data analysis systems

- generative AI applications

Some companies across the United States now adopt AI simply to keep up in a fast-changing digital market. Yet without solid oversight, these tools can cause deep problems in how work gets done and raise ethical concerns that did not exist before.

Why Governance Matters in AI Adoption

AI increasingly shapes major business decisions. Algorithms often play a role in who gets hired. Loan approvals are frequently influenced by automated scoring. Medical recommendations sometimes come from software patterns, not only from doctors' judgment. Security alerts are raised or suppressed by automated threat scanning. Even the way firms communicate with customers changes when chatbots take over conversations.

This is why clear rules matter so much. A governance structure must define:

- who is responsible for AI decisions

- how data is managed

- how risks are monitored

- how compliance is maintained

- how transparency is ensured

- how ethical concerns are addressed

Without proper governance, AI systems can create confusion, security risks, legal exposure, and reputational harm.

AI Adoption Growth in the United States

The United States remains one of the world’s leading markets for artificial intelligence adoption.

AI Adoption Among U.S. Businesses

| Year | Estimated Business AI Adoption |
|---|---|
| 2022 | 35% |
| 2024 | 58% |
| 2026 | 78% (projected) |

The rapid rise in AI usage also strengthens the need for sturdier governance structures.

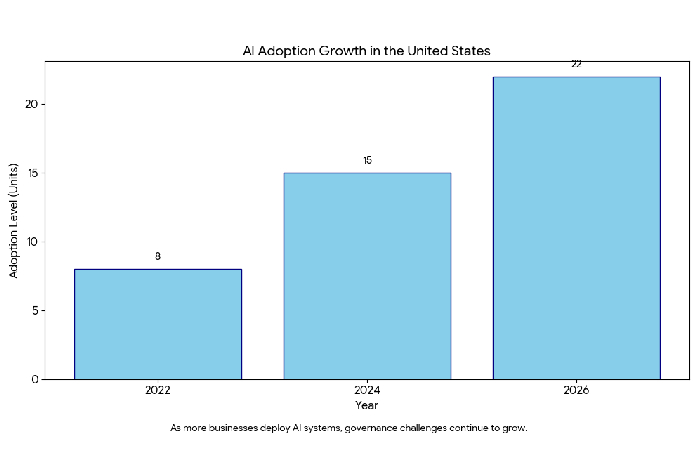

AI Adoption Growth Chart

AI Adoption Growth in the United States

As more businesses deploy AI systems, governance challenges continue to grow.

Common Issues in Managing AI Change


Unclear Accountability

Who takes the blame when AI fails? That question lies at the heart of many oversight struggles.

When artificial intelligence causes harm, it’s tough for companies to pin down who’s responsible.

Common questions include:

- Who approved the AI system?

- Who monitored how it performed?

- Who is responsible for errors or bias?

- Does responsibility lie with the developers who built the tools or the leaders who set the direction? Could it be both, yet neither fully?

When rules are not clear, it’s difficult to know who should take responsibility.


Shadow AI Risks

Many employees quietly use AI apps that their companies have never approved.

This practice is known as shadow AI.

Shadow AI can lead to serious problems, such as:

- unauthorized data sharing

- privacy breaches

- security vulnerabilities

- uncontrolled automation

Concern is growing inside U.S. companies as employees use public AI tools to handle private work data. Some leaders fear that confidential information could leak through online platforms that lack corporate-grade protections, unlike internal software that keeps details locked down. Managers increasingly debate whether the convenience of these tools outweighs the hidden risks of sending files to third-party servers. Trust erodes when confidential plans pass through apps owned by outside companies.


Data Privacy Concerns

AI systems usually need large amounts of data to work well. When the rules for handling that data are weak, privacy risks grow quickly.

Potential risks include:

- exposure of customer data to people who should not have access

- misuse of sensitive data

- non-compliance with regulations

- unauthorized access to confidential records

Privacy rules across the United States keep shifting, and companies must manage information carefully to keep up. As each state passes new measures, organizations have to adjust their systems and stay clear about how they use data. Even small missteps can lead to bigger problems later, and where compliance is weak, risks grow without warning. Because trust depends on transparency, how a firm treats data shows its priorities.


Bias and Ethical Challenges

When AI models are trained on flawed or unrepresentative data, they can quietly produce skewed results. Uneven training examples can push decisions toward unfair patterns, and errors buried in the data can shape outputs without anyone noticing. If the foundation is tilted, whatever is built on it leans too.

Examples include:

- biased hiring recommendations

- unfair lending decisions

- unequal healthcare assessments

Without close monitoring, such errors can slip through, exposing organizations to lawsuits and quietly eroding trust. Once safeguards fade into the background, people start questioning the organization's motives.


Complex Regulatory Environment

The United States has no single, unified national framework for regulating AI. Each state is moving on its own, without one shared system guiding them all.

Instead, businesses must navigate:

- federal regulations

- state-level privacy laws

- industry-specific compliance rules

Different rules in each state make oversight harder for organizations that operate in more than one state.

AI Governance Challenges

| Governance Problem | Possible Impact |
|---|---|
| Weak accountability | Legal disputes |
| Poor oversight | Operational failures |
| Shadow AI usage | Security risks |
| Biased AI outputs | Reputation damage |
| Weak compliance systems | Financial penalties |

Why Technology Alone Is Not Enough


Without governance:

- risks increase

- oversight weakens

- compliance problems grow

- public trust declines

Successful AI transformation requires both innovation and responsible management.

Technology vs Governance

| Technology Focus | Governance Focus |
|---|---|
| AI development | Risk management |
| Automation speed | Accountability |
| Data processing | Data protection |
| System performance | Ethical oversight |
| Innovation | Regulatory compliance |

Leadership’s Role in AI Governance

Corporate leadership plays a major role in successful AI transformation.

Executives and board members need to understand:

- AI-related risks

- regulatory obligations

- ethical responsibilities

- governance strategies

Organizations that lack leadership involvement often struggle to manage AI risks effectively.

Strong leadership helps ensure that governance remains part of long-term business strategy.

Core Elements of an AI Governance Framework

Businesses need structured governance systems to manage AI responsibly.

Important Components Include:

Risk Evaluation

Before deploying AI tools, organizations should assess what could go wrong. A risk review is an essential step in putting new technology into practice.

Human Oversight

High-risk AI decisions should involve human supervision.
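To make the idea concrete, here is a minimal Python sketch of a human-in-the-loop gate. It assumes each AI recommendation arrives with a risk score between 0 and 1 produced elsewhere; the threshold, the set of always-reviewed use cases, and every name in the code (`AIDecision`, `route_decision`, and so on) are hypothetical illustrations, not a standard or any product's API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values for illustration only; a real organization
# would set these in its own risk policy.
HIGH_RISK_THRESHOLD = 0.7
ALWAYS_REVIEW_USE_CASES = {"hiring", "lending", "healthcare"}


@dataclass
class AIDecision:
    use_case: str        # hypothetical category label, e.g. "lending"
    recommendation: str  # what the AI system suggests
    risk_score: float    # assumed to be assigned upstream, 0.0 (low) to 1.0 (high)


@dataclass
class Outcome:
    final_decision: Optional[str]  # None while a human review is pending
    needs_human_review: bool


def route_decision(decision: AIDecision) -> Outcome:
    """Auto-apply low-risk recommendations; hold high-risk ones for a human."""
    high_risk = (
        decision.use_case in ALWAYS_REVIEW_USE_CASES
        or decision.risk_score >= HIGH_RISK_THRESHOLD
    )
    if high_risk:
        # Nothing is applied until a person approves or overrides it.
        return Outcome(final_decision=None, needs_human_review=True)
    return Outcome(final_decision=decision.recommendation, needs_human_review=False)


if __name__ == "__main__":
    print(route_decision(AIDecision("support_triage", "route_to_billing", 0.2)))
    print(route_decision(AIDecision("lending", "deny", 0.3)))
```

Holding a high-risk decision instead of auto-applying it keeps a named person accountable for the outcome; where the threshold sits, and which use cases always need review, are policy choices rather than technical ones.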

Transparency Standards

Companies should explain how their AI systems reach decisions. People deserve to know what drives those outputs.

Monitoring Systems

AI systems should be monitored continuously. Constant observation makes errors easier to detect, lets less bias slip through, and surfaces performance problems faster.

Compliance Controls

Organizations must ensure AI systems follow applicable laws and policies.

AI Governance Structure

Board Leadership

│

Risk & Compliance Teams

│

AI Governance Officers

│

Data Management Teams

│

AI Monitoring Operations

Industries Facing Major AI Governance Challenges

Healthcare

AI systems are increasingly used for diagnosis and patient support.

Poor governance may affect patient safety and medical accuracy.

Finance

Financial institutions use AI for fraud detection and loan approvals.

Bias or errors can result in unfair financial decisions.

Human Resources

AI hiring tools may unintentionally favor or exclude certain candidates.

Cybersecurity

AI-powered automation can improve security operations but may also create new vulnerabilities if poorly managed.

The Rise of Autonomous AI Systems

One growing concern is the rise of autonomous or agentic AI systems.

These systems can perform tasks and make decisions with limited human involvement.

While they improve efficiency, they also increase governance risks because organizations may struggle to monitor autonomous decision-making processes.

Risks of Autonomous AI

| Risk | Description |
|---|---|
| Independent actions | AI acts without approval |
| Limited transparency | Decisions difficult to explain |
| Security exposure | Increased cyber risks |
| Compliance failures | Harder to monitor regulations |

Importance of Strong Data Governance

AI systems depend heavily on high-quality data.

Poor data governance can result in:

- inaccurate predictions

- biased outcomes

- security risks

- unreliable AI performance

Strong data governance improves accuracy, trust, and compliance.

Public Trust and AI Governance

Consumers are becoming more aware of how businesses use artificial intelligence.

People increasingly expect:

- fairness

- transparency

- ethical AI practices

- responsible data usage

Organizations with strong governance frameworks are more likely to build public trust.

Best Practices for Responsible AI Governance

Build Cross-Functional Teams

Include experts from:

- legal departments

- cybersecurity teams

- compliance divisions

- technology departments

Set Rules for AI Use

Companies should set clear guidelines on how staff may use AI tools.

Train Employees

Every team member should understand what can go wrong with AI and the rules that govern its use.

Monitor AI Systems Continuously

Oversight does not end at launch. Once systems are running, someone must keep watching them, because attention that fades too soon lets problems go unnoticed. Ongoing checks catch issues early, while neglect after deployment can undo careful planning at the start.

Maintain Detailed Documentation

Recording the decisions AI systems make makes them easier to review later. Oversight grows stronger because each step leaves a traceable record.
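As an illustration, the sketch below keeps an append-only audit trail in the JSON Lines format (one JSON record per line) using only Python's standard library. The field names, the file path, the system label, and the functions `log_ai_decision` and `read_audit_log` are hypothetical; a production system would also need access controls and retention rules that this sketch does not cover.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def log_ai_decision(log_path: str, system: str, input_summary: str,
                    output: str, reviewer: Optional[str] = None) -> dict:
    """Append one AI decision to an append-only JSON Lines audit log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,                # which AI system made the decision
        "input_summary": input_summary,  # a summary, not raw personal data
        "output": output,                # what the system decided or recommended
        "human_reviewer": reviewer,      # who signed off, if anyone
    }
    # Opening in "a" mode only ever adds lines; earlier records are untouched.
    with open(log_path, "a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(record) + "\n")
    return record


def read_audit_log(log_path: str) -> list:
    """Load every recorded decision so reviewers can inspect it later."""
    with open(log_path, encoding="utf-8") as log_file:
        return [json.loads(line) for line in log_file if line.strip()]


if __name__ == "__main__":
    import os
    import tempfile

    path = os.path.join(tempfile.mkdtemp(), "ai_audit_log.jsonl")
    log_ai_decision(path, "loan-screening-model", "applicant 123 features", "refer")
    log_ai_decision(path, "loan-screening-model", "applicant 124 features",
                    "approve", reviewer="j.doe")
    for entry in read_audit_log(path):
        print(entry["timestamp"], entry["output"], entry["human_reviewer"])
```

Storing a summary of the inputs rather than the raw data is a deliberate choice here: the audit trail itself should not become a new privacy risk.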

AI Governance Maturity Levels

| Stage | Description |
|---|---|
| Beginner | Minimal governance |
| Developing | Basic oversight policies |
| Mature | Structured governance systems |
| Advanced | Continuous monitoring and auditing |

The Future of AI Governance in the United States

As AI systems grow more capable, the rules around them must keep up. Progress means oversight cannot lag behind: because technology moves fast, how we manage it matters just as much. Over time, guiding AI safely will become a bigger priority, and every change will push leaders to think harder about control.

Future trends may include:

- stricter AI regulations

- stronger compliance requirements

- increased corporate accountability

- higher expectations for transparency

Companies that ignore governance invite trouble: lawsuits, broken workflows, and a steady loss of trust.

Final Thoughts

In the United States, AI has moved far beyond gadgets. In towns and cities nationwide, it shapes decisions, shifts accountability, tests regulations, and affects trust in how organizations run.

Most firms chasing innovation still overlook one thing: guardrails matter just as much as breakthroughs. Progress without boundaries rarely leads anywhere safe. Structure builds confidence more than fast results ever could. Oversight is not paperwork; it is what keeps clever systems honest. What an organization is held accountable for weighs as heavily as what its technology can do, and the way governance is set up steers actions far beyond individual intentions.

Success will belong to firms that move on two tracks at once: pioneering new tools while honoring strong guidelines. Pace alone is not enough; clarity about data flows and decision rights counts too. Oversight should not skip any phase; it belongs in design from the start. Today's decisions shape tomorrow's systems, which makes fairness an urgent priority now. Long-term success comes not merely from scale but from ethics built into every decision.